Apache Airflow is an open-source workflow management platform designed for data engineering pipelines. It was started by Maxime Beauchemin at Airbnb in October 2014. Airbnb created Airflow to manage its increasingly complex workflows and to author and schedule them programmatically. Airflow was open source from the very first commit; it was officially brought under the Airbnb GitHub and announced in June 2015. The project joined the Apache Software Foundation's Incubator program in March 2016, and the Foundation announced Apache Airflow as a Top-Level Project in January 2019.

Airflow is written in Python, and workflows are created via Python scripts. It is designed under the principle of "configuration as code", and its extensible Python framework allows developers to build workflows that connect with virtually any technology.

Most issues are related to connectivity between Airflow and DataHub. In this case, the connection datahub-rest has clearly not been registered. Looks like we forgot to register the connection with Airflow! Let's execute Step 4 to register the DataHub connection with Airflow.
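Since most problems come down to connectivity, a quick reachability check can narrow things down before digging into Airflow logs. The sketch below uses only the Python standard library; the function name `can_reach` is made up for illustration, and it only confirms that a TCP connection can be opened, not that the DataHub connection is registered in Airflow.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds.

    Hypothetical helper for illustration; it says nothing about whether the
    service behind the port is healthy, only that the port accepts connections.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False
```

Run from inside the Airflow webserver container, `can_reach("datahub-gms", 8080)` would be the relevant call, assuming the host name and port used elsewhere in this post. A `False` result suggests a networking problem rather than a missing connection registration.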

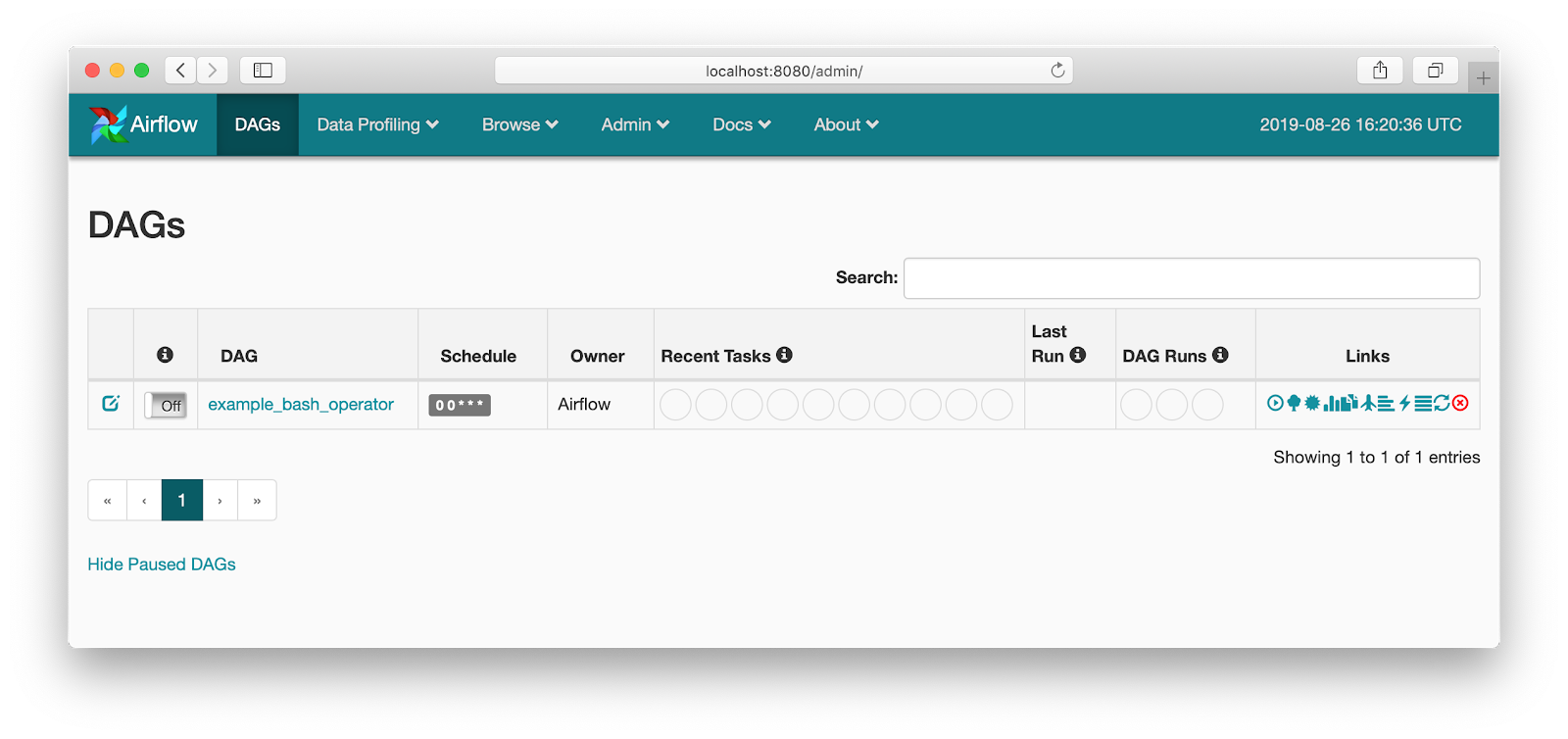

Navigate the Airflow UI to find the sample Airflow DAG we just brought in. By default, Airflow loads all DAGs in paused status, so unpause the sample DAG to use it.

After the DAG runs successfully, go over to your DataHub instance to see the pipeline and navigate its lineage.
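Unpausing can also be scripted instead of clicked. The sketch below builds (but does not send) a request against the Airflow 2.x stable REST API, which accepts a `PATCH` on `/api/v1/dags/{dag_id}` with `is_paused` set to `false`. Several things here are assumptions rather than facts from this post: that the webserver is reachable on `localhost:58080`, that basic authentication is enabled for the API, and the DAG id and credentials passed in. The function name is hypothetical.

```python
import base64
import json
import urllib.parse
import urllib.request


def build_unpause_request(base_url: str, dag_id: str,
                          username: str, password: str) -> urllib.request.Request:
    """Build a PATCH request that would set is_paused to false for one DAG.

    Assumes the Airflow 2.x stable REST API with basic auth enabled.
    The request is only constructed here, not sent.
    """
    url = f"{base_url.rstrip('/')}/api/v1/dags/{urllib.parse.quote(dag_id, safe='')}"
    body = json.dumps({"is_paused": False}).encode("utf-8")
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="PATCH",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Building and sending are kept separate so the request can be inspected first.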

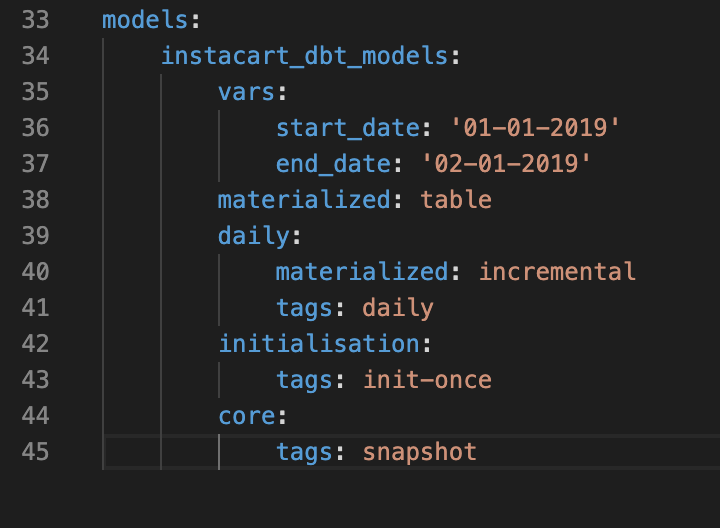

What is different between this docker-compose file and the official Apache Airflow docker-compose file?

- This docker-compose file is derived from the official Airflow docker-compose file but makes a few critical changes to make interoperability with DataHub seamless.
- The Airflow image in this docker-compose file extends the base Apache Airflow Docker image and is published separately. It includes the latest acryl-datahub pip package installed by default, so you don't need to install it yourself.
- This docker-compose file sets up the networking so that the Airflow containers can talk to the DataHub containers through the datahub_network bridge interface.
- It modifies the port forwarding to map the Airflow webserver port 8080 to port 58080 on the localhost, to avoid conflicts with the DataHub metadata-service, which is mapped to 8080 by default.
- It also sets up the environment variables that configure Airflow's lineage backend to talk to DataHub (look for the `AIRFLOW__LINEAGE__BACKEND` and `AIRFLOW__LINEAGE__DATAHUB_KWARGS` variables).

First you need to initialize Airflow in order to create the initial database tables and the initial Airflow user. When the Airflow containers start, the output includes lines such as:

    Container airflow_deploy_airflow-scheduler_1 Started 15.7s
    Attaching to airflow-init_1, airflow-scheduler_1, airflow-webserver_1, airflow-worker_1, flower_1, postgres_1, redis_1
    airflow-init_1 | DB: | INFO - * Running on (Press CTRL+C to quit)

Registering the DataHub connection with Airflow involves two things:

- Find the container running the Airflow webserver: `docker ps | grep webserver | cut -d " " -f 1`.
- Run the registration command inside that container to register the datahub_rest connection type and connect it to the datahub-gms host on port 8080.

Note: This is what requires Airflow to be able to connect to the datahub-gms host (the container running the datahub-gms image), and it is why we needed to connect the Airflow containers to the datahub_network using our custom docker-compose file.
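To make the `docker ps | grep webserver | cut -d " " -f 1` pipeline less opaque, here is a rough Python equivalent: keep the lines that mention `webserver`, then take everything before the first space, which in `docker ps` output is the container ID. The function names and the sample output in the comment are made up for illustration.

```python
import subprocess


def first_field_of_matching_lines(text: str, needle: str) -> list:
    """Mimic `grep needle | cut -d " " -f 1` on multi-line text."""
    fields = []
    for line in text.splitlines():
        if needle in line:                        # grep: keep matching lines
            fields.append(line.split(" ", 1)[0])  # cut: text before first space
    return fields


def find_webserver_container_ids() -> list:
    """Run `docker ps` and return the IDs of containers whose line mentions 'webserver'.

    Requires the docker CLI on PATH; raises CalledProcessError if `docker ps` fails.
    """
    result = subprocess.run(["docker", "ps"], capture_output=True, text=True, check=True)
    return first_field_of_matching_lines(result.stdout, "webserver")


# Hypothetical `docker ps` output, trimmed to three columns:
#   CONTAINER ID   IMAGE                NAMES
#   abc123def456   some/airflow-image   airflow_deploy_airflow-webserver_1
#   0123456789ab   postgres:13          airflow_deploy_postgres_1
# first_field_of_matching_lines(...) on that text yields ["abc123def456"].
```

Like the shell pipeline, this matches on the whole line, so any container whose image or name contains `webserver` will be returned.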
